Cat Detection from Scratch

Audience: Engineers who fine-tune models but have never trained one from random weights.

Reading time: ~19 minutes.

In May 2017 I sat down to learn deep learning by doing the most concrete thing I could think of: training a neural network to detect cats in photographs. I had been writing software for twenty-five years at that point. I knew Python. I knew Linux. I had a working sense of linear algebra and a fading memory of multivariable calculus. I had used machine learning libraries the way most engineers had: as a black box that did clever things you didn’t really need to understand.

I wanted to understand. So I rented a GPU, opened a blank Python file, and started typing.

This is an account of what training a neural network from random weights actually looks like underneath the abstractions you already use. If you have fine-tuned a model, called an inference API, or wired up a vector store, the loop in this post is the thing humming at the bottom of all of that. Knowing its shape changes how you read training curves, how you reason about model failures, and how you think about what is and isn’t possible in a given budget.

The model I trained classified handwritten digits, hit a wall, and taught me everything I needed to know about why “just go train one yourself” is a far more loaded sentence than it sounds.

A footnote before we begin. A year or so after I wrote the original version of this study guide, I shared it with a recent University of Washington CS grad who was on my team. He told me it was basically the material from his first-year ML class. I am still not sure whether that was meant to be a critique. Either way, I was happy: landing at “first-year ML curriculum” felt like a reasonable destination for a self-directed side project, and it is roughly the altitude I am aiming to explore here.

A small note on voice. Code blocks, terminal output, and indented quoted passages below are reproduced from the original 2017 study guide. The prose around them is from today, looking back. I use past tense for what actually happened in 2017 on the K80, and present tense for how the code or the math works in any era.

Training vs. inference

The first thing worth getting straight is that training and inference are two completely different workloads that happen to share a model file.

Inference is what you do every time you call a model: a forward pass through the network’s fixed weights, input in, prediction out. It is, computationally, a sequence of matrix multiplies. The weights are read-only. The cost is bounded and predictable.

Training is the part where those weights stop being a model and become a search problem. You start with random numbers in every weight matrix. You show the network an example, see how wrong its answer is, compute how each weight contributed to the wrongness, and nudge every weight in the direction that would have made the answer slightly less wrong. Then you do that several billion times.

This is the thing that’s hidden when you fine-tune through somebody else’s framework. The framework hands you a Trainer object that wraps a forward, a backward, an optimizer step, and a learning rate schedule. The actual mechanics (what the loss looks like as a scalar, how a gradient propagates back through a softmax, what an Adam optimizer is actually doing to your weight tensors) are usually a few abstraction layers down. If you have only ever seen training from above, you’ve seen the dashboard but not the engine.

So: cats. Or, as it turned out, digits.

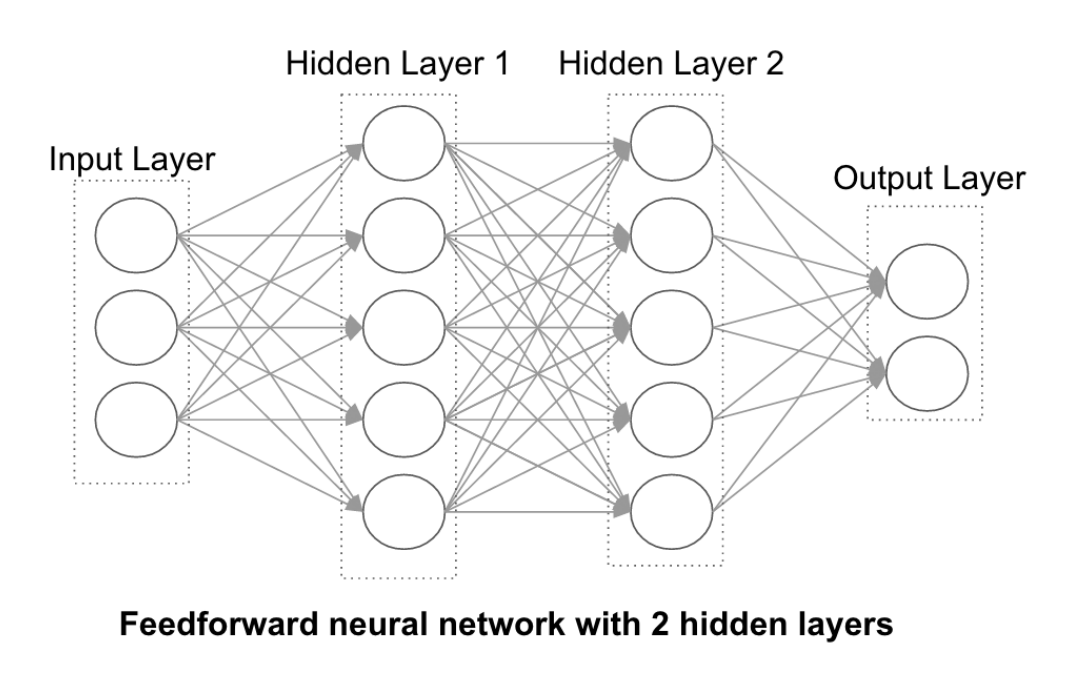

Deep neural networks

A neural network is, structurally, a stack of layers. Each layer takes a vector of numbers (the activations of the previous layer), multiplies it by a matrix of learnable weights, adds a bias vector, and runs the result through a nonlinearity. The output of one layer becomes the input of the next. The first layer sees your raw data; the last layer produces the answer. Everything in between is called a hidden layer, because nothing outside the network ever observes it directly.
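To make that description concrete, here is a minimal plain-Python sketch of one layer applied to a single input vector. It is not from the 2017 guide; the name dense_layer and the hand-picked weights are hypothetical, chosen only so the arithmetic is easy to follow by eye.

```python
def relu(z):
    """The nonlinearity: pass positive values through, clip negatives to zero."""
    return max(0.0, z)

def dense_layer(inputs, weights, biases, activation):
    """One layer for one input vector: activation(inputs @ weights + biases).

    weights[i][j] connects input i to output unit j.
    """
    outputs = []
    for j in range(len(biases)):
        z = sum(inputs[i] * weights[i][j] for i in range(len(inputs))) + biases[j]
        outputs.append(activation(z))
    return outputs

# Hypothetical 3-input, 2-unit layer.
x = [1.0, 2.0, 3.0]
W = [[0.5, -1.0],
     [0.0,  1.0],
     [1.0, -1.0]]
b = [0.5, 0.0]

hidden = dense_layer(x, W, b, relu)
print(hidden)  # [4.0, 0.0]
```

The first unit sums to 4.0 and passes through unchanged; the second sums to -2.0 and the nonlinearity clips it to zero. A deep network is this function called repeatedly, each call feeding the next.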

The word “deep” in deep learning is just the observation that stacking more hidden layers, then training the whole stack end-to-end, lets the network discover its own feature representations without you handcrafting them. Pre-2012, computer vision practitioners wrote code that extracted edges, corners, color histograms, and SIFT descriptors, and fed those features into a classifier. Post-2012, after AlexNet won ImageNet, you fed raw pixels in and the network figured out the features for you. That shift, from human-designed features to learned representations, is what every “deep learning revolution” headline was actually about.

There are two big training regimes worth naming. Supervised learning uses labeled data: each input comes paired with the correct answer, the network’s prediction is scored against that answer, and the loss signal flows back through the weights. MNIST is supervised. Every digit image has a label from 0 to 9 attached. Almost everything practical you have ever used in production, including most fine-tuned models, was trained this way. Unsupervised learning has no labels; the network’s job is to discover structure (clusters, factors of variation, latent representations) in the data on its own terms. Foundation models like LLMs blur the line. The next-token objective is technically self-supervised, deriving labels from the data itself. The supervised vs. unsupervised vocabulary still matters for how you reason about a training run.

You do not train on all your data. The standard discipline is to split it: roughly 60% for the training set, 20% for a validation set that you use during training to tune hyperparameters and decide when to stop, and 20% for a held-out test set that you only look at once, at the very end, to honestly estimate how the model will do in the wild. (The older convention was 80/20 train/test with no separate validation set; modern practice prefers the three-way split.) The reason for any of this is overfitting. It is trivially easy to produce a model that memorizes its training data perfectly and then collapses completely on anything it has not seen before. You will not know this is happening unless you have data the model was never allowed to look at during training. The test set is the only honest measurement you have. If you peek at it, tune against it, or train on it, you have given yourself nothing but vibes.
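A three-way split is only a few lines. This is a sketch under assumptions, not code from the 2017 guide: the function name three_way_split, the fixed seed, and the stand-in dataset of 1,000 integers are all hypothetical.

```python
import random

def three_way_split(examples, train_frac=0.6, val_frac=0.2, seed=0):
    """Shuffle once, then cut into train / validation / test with no overlap."""
    shuffled = list(examples)
    random.Random(seed).shuffle(shuffled)   # fixed seed so the split is reproducible
    n_train = round(len(shuffled) * train_frac)
    n_val = round(len(shuffled) * val_frac)
    train = shuffled[:n_train]
    val = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]       # whatever remains is the held-out test set
    return train, val, test

train, val, test = three_way_split(range(1000))
print(len(train), len(val), len(test))  # 600 200 200
```

The important property is not the exact percentages but that the three sets never share an example, and that the split happens once, before any training, so nothing about the test set can leak into your decisions.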

Why I didn’t just download a cat detector

Here is something a 2026 reader will find absurd. In 2017, “just download a state-of-the-art image classifier” was not a real option in the way it is now. ResNet existed (the paper had landed at the end of 2015), but I didn’t know about it. ImageNet existed. The pre-trained-model ecosystem existed, in the sense that the weights were out there, but the surrounding ergonomics had not arrived yet. There was no Hugging Face Hub. There was no from transformers import pipeline. There was a TensorFlow blog post or two with TODO-shaped instructions.

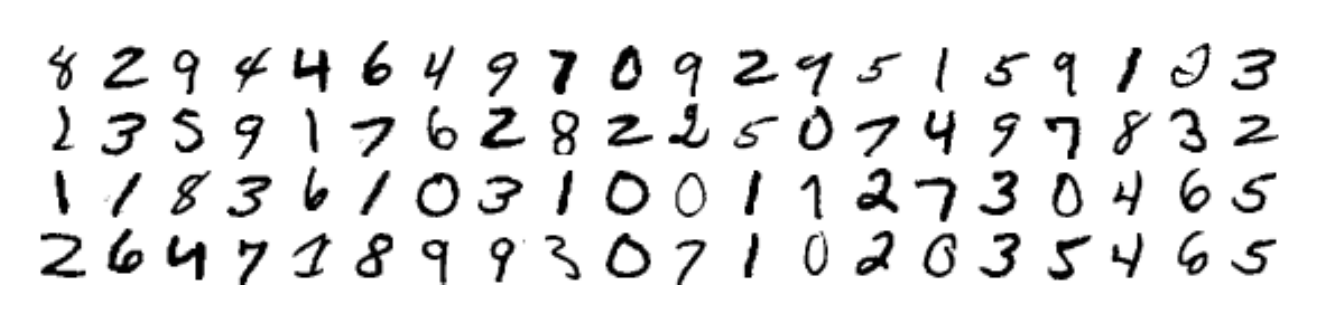

I didn’t know any of this. What I knew was that Andrew Ng had a series of YouTube videos, that TensorFlow had a Python API, and that there was a starter dataset called MNIST that everyone seemed to use. MNIST is 70,000 28×28 grayscale images of handwritten digits, labeled 0 through 9. It is the “hello, world” of computer vision, the way a Fibonacci function is the hello, world of recursion.

So before I tried to train a cat detector, I trained a digit classifier. The plan was that this would warm me up. The plan was that cats were close enough to digits that the lessons would transfer.

The plan was wrong, but in instructive ways.

The setup, 2017 edition

The GPU was a Tesla K80 sitting in an AWS p2.xlarge EC2 instance. It cost less than a dollar an hour. To set the scene, here is what I wrote in the study guide at the time, with all the wide-eyed enthusiasm of someone who had just discovered that you could rent serious compute by the hour:

For just a few dollars an hour, you can now access computational power equal to some of the world’s top supercomputers in 2006. Amazon Web Services (AWS) claims 70 teraflops (trillion floating point operations per second) on a single p2.16xlarge instance which includes 16 GPU cores, 64 vCPUs, and 732 GB RAM. And you can scale that out into a low-latency compute grid if you need more. Note that TensorFlow doesn’t automatically parallelize your model; you’ll need to specifically design your model to take advantage of more than one GPU.

I wasn’t using a p2.16xlarge. I rented the smallest variant, the p2.xlarge, with a single Tesla K80 advertised at 8.7 TFLOPS. The big instance was overkill for MNIST and I couldn’t afford it on a side-project budget anyway. The point of the quote is the framing: a GPU instance you could rent by the hour was, in 2017, the kind of compute that would have qualified as a top-500 supercomputer a decade earlier. Everyone working on ML at the time knew this and was a little dizzy from it. The frontier had become rentable. (Eight years later, the equivalent felt-impossible-now-trivial machine is the consumer Mac with enough RAM to run a 70B-parameter language model locally. The “wait, we can just do this?” feeling never really goes away; it just changes scope.)

There was a pre-baked “Deep Learning AMI” image you could launch and get TensorFlow, CUDA, and Jupyter pre-installed, which was important because installing CUDA from scratch on Ubuntu 14.04 was an exercise in spiritual development.

============================================

Deep Learning AMI for Ubuntu

============================================

ubuntu@ip-172-31-47-242:~$ cat /proc/driver/nvidia/version

NVRM version: NVIDIA UNIX x86_64 Kernel Module 367.57

ubuntu@ip-172-31-47-242:~$

The framework was TensorFlow 1.x, which used a “build a graph, then run a session” model that has since been ripped out of TensorFlow itself and replaced with the eager execution model that PyTorch had been doing all along. Every line of code in this post is from that era. I’m leaving it in TensorFlow 1.x because that is what the journey actually looked like, and because the shape of the loop is the same regardless of which framework you’d use today.

The smallest interesting training loop

Here is the first thing I trained. The whole program is about forty lines.

## mnist1.py

import tensorflow as tf

# download MNIST data from yann.lecun.com/exdb/mnist/

from tensorflow.examples.tutorials.mnist import input_data

mnist = input_data.read_data_sets("MNIST_data/", one_hot=True)

# create placeholder for 28x28 images

x = tf.placeholder(tf.float32, [None, 784])

# Weight & bias variables (28x28 = 784, 10 possible digits to classify)

W = tf.Variable(tf.zeros([784, 10]))

b = tf.Variable(tf.zeros([10]))

# implement the model using a softmax regression

y = tf.nn.softmax(tf.matmul(x, W) + b)

# implement the cross entropy function

y_ = tf.placeholder(tf.float32, [None, 10])

cross_entropy = tf.reduce_mean(-tf.reduce_sum(y_ * tf.log(y), reduction_indices=[1]))

# minimize cross-entropy using gradient descent algorithm

train_step = tf.train.GradientDescentOptimizer(0.5).minimize(cross_entropy)

# launch the model, initialize variables

sess = tf.InteractiveSession()

tf.global_variables_initializer().run()

# evaluate the model

correct_prediction = tf.equal(tf.argmax(y, 1), tf.argmax(y_, 1))

accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))

# train batches of 100 (1,000 times total), making the gradient descent stochastic

for _ in range(1000):

    batch_xs, batch_ys = mnist.train.next_batch(100)

    sess.run(train_step, feed_dict={x: batch_xs, y_: batch_ys})

# print the accuracy of this model

print(sess.run(accuracy, feed_dict={x: mnist.test.images, y_: mnist.test.labels}))

Forty lines is enough to train a model. It is worth pausing on what each block is doing, because almost every concept that matters at scale shows up here in miniature.

The 28×28 images get flattened into 784-dimensional vectors. The model is a single matrix multiplication: take the input vector x, multiply by a 784×10 weight matrix W, add a 10-vector of biases b. The output is 10 raw numbers, one per digit class, with no particular shape or scale.

Softmax is what turns those 10 raw numbers into a probability distribution. It exponentiates each one and divides by the sum of all the exponentials, so the result is 10 positive numbers that sum to 1. The largest input gets the largest probability, and the exponential amplifies the relative gaps so the network can express genuine confidence in a particular class rather than spreading probability uniformly. Softmax is the standard last layer of essentially every classifier you have ever used, and the same operation sits at the end of an LLM’s next-token head, turning a vector of scores over the vocabulary into the distribution you sample from. It has no learnable weights of its own. It is just a fixed function that reshapes the readout into a valid probability distribution, which is why most diagrams draw it as an annotation on the output layer rather than as a layer in its own right.
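Softmax is short enough to write out by hand. The sketch below is plain Python rather than the tf.nn.softmax call in the listing, and the three input scores are made up for illustration. Subtracting the maximum before exponentiating is a common numerical-stability trick that leaves the result unchanged.

```python
import math

def softmax(scores):
    """Turn raw scores into positive numbers that sum to 1."""
    shift = max(scores)                        # avoids overflow in exp for large scores
    exps = [math.exp(s - shift) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

probs = softmax([2.0, 1.0, 0.1])
print([round(p, 3) for p in probs])  # [0.659, 0.242, 0.099]
```

The gaps between the raw scores (1.0 and 0.9) turn into ratios between the probabilities, which is the amplification described above.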

That is the entire model. A linear projection from pixels to class scores, followed by softmax. No hidden layers. No deep stack of nonlinearities. The weights happen to be initialized to all zeros here, which would be a terrible idea for any deeper architecture: hidden units that start identical receive identical gradients and never differentiate. It is fine for this one because there are no hidden units to get stuck that way. Each of the ten output columns is scored against a different class, so the very first gradient step pushes each column of W to a different value.

The loss function is cross-entropy, which is the standard scalar error signal for classification and pairs naturally with softmax. Concretely, for a single training example whose correct class is c, cross-entropy is just minus the log of the probability the model assigned to c. If the model said “definitely a 7” and the answer was 7, the probability is close to 1 and the log is close to 0, so the loss is tiny. If the model said “probably a 3” and the answer was 7, the probability assigned to 7 is small, its log is a large negative number, and minus that log, the loss, is large. The training objective is to make this number go down.
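The two cases in that paragraph can be put into numbers. This is an illustrative sketch, not output from the 2017 run: the three probability vectors are hypothetical softmax outputs I made up, and the true label is 7 in each case.

```python
import math

def cross_entropy(probs, correct_class):
    """Loss for one example: minus the log of the probability given to the right class."""
    return -math.log(probs[correct_class])

# Hypothetical softmax outputs over the ten digit classes.
definitely_seven = [0.01] * 10
definitely_seven[7] = 0.91        # confident and right

probably_three = [0.05] * 10
probably_three[3] = 0.55          # leaning toward the wrong class; 7 only gets 0.05

uniform_guess = [0.1] * 10        # no opinion at all

for name, p in [("definitely a 7", definitely_seven),
                ("probably a 3", probably_three),
                ("uniform guess", uniform_guess)]:
    print("%-15s loss = %.3f" % (name, cross_entropy(p, 7)))
```

The confident-and-right case costs about 0.09, the wrong-leaning case about 3.0, and the uniform guess about 2.3, which is ln(10). That last value is roughly where a ten-class model with no information starts.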

The optimizer is plain stochastic gradient descent with a learning rate of 0.5. On each batch of 100 examples, TensorFlow computes the gradient of the loss with respect to every parameter in the model (via backpropagation, which is just the chain rule from calculus applied mechanically through the computation graph), and updates each parameter in the opposite direction of its gradient, scaled by 0.5.
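Here is roughly what that update amounts to when written out by hand. It is a sketch under stated assumptions, not what TensorFlow literally executes: it handles a single example rather than a batch of 100, uses a hypothetical toy problem with 2 input features and 3 classes, and relies on the standard result that for softmax followed by cross-entropy, the gradient of the loss with respect to each class score is the predicted probability minus the one-hot label.

```python
import math

def softmax(scores):
    shift = max(scores)
    exps = [math.exp(s - shift) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def predict(x, W, b):
    scores = [sum(x[i] * W[i][k] for i in range(len(x))) + b[k]
              for k in range(len(b))]
    return softmax(scores)

def sgd_step(x, label, W, b, learning_rate):
    """One gradient-descent update on one example; returns the loss before the update."""
    probs = predict(x, W, b)
    for k in range(len(b)):
        # d(loss)/d(score_k) = prob_k - one_hot_k for softmax + cross-entropy.
        grad_score = probs[k] - (1.0 if k == label else 0.0)
        b[k] -= learning_rate * grad_score
        for i in range(len(x)):
            # Chain rule: score_k depends on W[i][k] through x[i].
            W[i][k] -= learning_rate * grad_score * x[i]
    return -math.log(probs[label])

# Toy problem: zero-initialized weights, one example whose correct class is 2.
x, label = [1.0, 2.0], 2
W = [[0.0] * 3 for _ in range(2)]
b = [0.0] * 3

losses = [sgd_step(x, label, W, b, learning_rate=0.5) for _ in range(5)]
print(["%.4f" % loss for loss in losses])
```

The first loss is ln(3), the uniform guess over three classes, and each repeat of the step drives it lower. The real loop does the same thing with the gradient averaged over a batch, and with a different batch every time.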

We run that loop 1,000 times. Each iteration sees 100 randomly-sampled training examples. The fact that we use a small random subset instead of the full training set on every step is what the “stochastic” in stochastic gradient descent means. Each step is far cheaper than a full-dataset gradient and, surprisingly, the noise it adds often helps the model generalize rather than hurting it.

A vocabulary note. One full pass through the training set is called an epoch. The MNIST training set has 55,000 images, and at a batch size of 100, one epoch is 550 iterations. So 1,000 iterations is less than two epochs. Each training example gets seen, on average, fewer than two times before we stop. That’s not much. The conventional wisdom is that you want to make many passes over the data, tens or hundreds of epochs, watching the validation loss flatten out before you call it done. People talk about training in epochs because it factors out the batch size: “train for 50 epochs” means the same thing whether your batch size is 32 or 1024, even though the iteration count differs by 32×.
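The steps-to-epochs conversion comes up often enough in this post that it is worth having as arithmetic. A small sketch, using the 55,000-image training set and batch size of 100 stated above; the helper names are my own.

```python
TRAIN_SIZE = 55000  # training images, as stated above

def epochs_seen(steps, batch_size):
    """How many passes over the training set a given number of steps amounts to."""
    return steps * batch_size / TRAIN_SIZE

def steps_needed(epochs, batch_size):
    """How many steps it takes to make a given number of passes."""
    return epochs * TRAIN_SIZE / batch_size

for steps in (1000, 10000, 100000):
    print("%6d steps at batch 100 = %.1f epochs" % (steps, epochs_seen(steps, 100)))

ratio = steps_needed(50, 32) / steps_needed(50, 1024)
print("50 epochs: batch 32 needs %.0fx the steps of batch 1024" % ratio)
```

The three step counts used in this post work out to about 1.8, 18.2, and 181.8 epochs.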

Output from that 2017 run, after about ten seconds on the K80:

0.9202

92% test accuracy. The terminal printed it in red, which I took as a triumphant color and which the TensorFlow examples meant as a warning. 92% is, in fact, terrible for MNIST. The state of the art in 2017 was already above 99%. The state of the art in 2000 was already above 99%. A linear model can get 92% on MNIST and that is more or less where its ceiling lives.

Watching the numbers tick by

I have given you the final accuracy from that first run and skipped what is, day to day, the most informative part of any training session: the stream of numbers that scrolls past while the script is still running.

A real training script does not print a single number at the end. It prints something every N steps so you can watch the model improve, or fail to improve, in real time. Adding that to the loop is one if-statement:

for i in range(10000):

    batch = mnist.train.next_batch(100)

    if i % 1000 == 0:

        train_accuracy = accuracy.eval(feed_dict={x: batch[0], y_: batch[1]})

        print("step %d, training accuracy %g" % (i, train_accuracy))

    train_step.run(feed_dict={x: batch[0], y_: batch[1]})

Re-run, and the terminal looked like this back on the K80 in 2017:

step 0, training accuracy 0.12

step 1000, training accuracy 0.89

step 2000, training accuracy 0.87

step 3000, training accuracy 0.89

step 4000, training accuracy 0.85

step 5000, training accuracy 0.95

step 6000, training accuracy 0.86

step 7000, training accuracy 0.93

step 8000, training accuracy 0.9

step 9000, training accuracy 0.92

0.9158

Read that the way you would read it live, one line every few seconds, watching it scroll. At step 0 the model has 12% accuracy on a random batch, which is essentially chance for ten classes. By step 1000 (roughly two epochs through the dataset) it has jumped to 89%. Then 87, 89, 85, 95, 86, 93, 90, 92, and a final test accuracy of 91.58% across all 10,000 held-out examples. Notice the training accuracy is noisy. It does not climb monotonically. Some batches happen to be easier than others, and the number you see at any given step is the score on whichever 100 examples the model just looked at. Plot it and you get a jagged line that climbs in aggregate but jitters everywhere along the way.
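The size of that jitter is predictable. As a back-of-envelope sketch (my own arithmetic, not something measured in 2017), treat each example as an independent hit or miss for a model whose true accuracy is about 90%. The binomial standard error then says how much a measured accuracy should wobble for a given sample size.

```python
import math

def accuracy_standard_error(true_accuracy, n_examples):
    """Standard deviation of measured accuracy over n independent examples."""
    return math.sqrt(true_accuracy * (1.0 - true_accuracy) / n_examples)

batch_noise = accuracy_standard_error(0.90, 100)     # one training batch
test_noise = accuracy_standard_error(0.90, 10000)    # the full test set

print("batch of 100:   +/- %.3f" % batch_noise)
print("test of 10,000: +/- %.3f" % test_noise)
```

A standard error of about 0.03 on a batch of 100 means readings anywhere from the mid-80s to the mid-90s are ordinary sampling noise, which matches the stream above. The same model measured on 10,000 examples wobbles by about 0.003, which is why the final test number is worth trusting to the third decimal place and the per-batch numbers are not.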

This stream is where ML work actually lives. You write some code. You hit run. A long bunch of numbers flows by on the terminal. After enough of these you start to read the shape: a healthy run climbs steeply, then plateaus; a stuck one bounces around one value for tens of thousands of steps; a broken one drifts off into NaN somewhere around step 800. You stop needing the final test number to know whether the experiment worked.

Then you act on what you saw. You kill the job. You change a hyperparameter, a learning rate, a layer width, an initialization. You hit run again. Another long bunch of numbers flows by. You compare its shape to your memory of the previous run, learn something, change a few more lines, run it again. Lather, rinse, repeat. That is the rhythm of training, and it is the loop every one of the “knob” sections below was, in real life, an instance of: write some code, watch a long bunch of numbers flow by, learn something, change a few lines, watch the next long bunch of numbers flow by.

The wall-clock speed of that loop matters more than people give it credit for. When the run takes ten seconds, you iterate freely and try fifteen things in an afternoon. When it takes ten minutes, you slow down and pick your experiments. When it takes ten hours, you stop experimenting and start writing meeting agendas about which experiment to greenlight. MNIST on a K80 was firmly in the ten-second regime. Most of what follows in this post was a steady migration away from that, toward longer streams and slower iteration, which is also the migration most ML practitioners follow over the course of a career.

Knob one: train longer

This is the moment you wave your hands and say “well, give it more time, then.” So I did.

for _ in range(100000): # was 1000

A hundred thousand iterations instead of a thousand. At 550 iterations per epoch, that’s roughly 180 full passes through the dataset. The K80 chugged for several minutes. The first taste of a training run that wasn’t instant. I made coffee. I watched the iterations tick by. I had begun the long apprenticeship of waiting for training jobs, which, eight years later, I am still serving.

0.9238

A third of a percentage point. The loss curve had flattened out long before. More training is not what’s missing.

This is the first lesson of every training run: when the model is at its capacity ceiling, more compute does nothing. The thing you change has to actually move the bottleneck. Eight years later, this is still the single most common mistake I see in training reports: a graph showing slow improvement and a recommendation to “let it cook longer” when the right action is to change the architecture, the data, or the objective.

Knob two: a better optimizer

Plain SGD with a hand-tuned learning rate is a blunt instrument. It treats every parameter the same and depends entirely on you picking the right global step size. Adam is one of a family of “adaptive” optimizers that keep per-parameter momentum and per-parameter learning-rate scaling. In practice it converges faster and is dramatically less sensitive to the learning rate you pick.
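To see what “per-parameter” means, here is one Adam update written out for a single scalar parameter. It follows the update rule from the Adam paper with its suggested defaults (beta1 = 0.9, beta2 = 0.999, epsilon = 1e-8); it is an illustrative sketch, not the internals of tf.train.AdamOptimizer, and the two gradients are hypothetical values picked to be four orders of magnitude apart.

```python
import math

def adam_step(param, grad, m, v, t, learning_rate=1e-4,
              beta1=0.9, beta2=0.999, epsilon=1e-8):
    """One Adam update for one scalar parameter. Returns (param, m, v)."""
    m = beta1 * m + (1.0 - beta1) * grad          # running mean of gradients (momentum)
    v = beta2 * v + (1.0 - beta2) * grad * grad   # running mean of squared gradients
    m_hat = m / (1.0 - beta1 ** t)                # bias correction for starting at zero
    v_hat = v / (1.0 - beta2 ** t)
    param -= learning_rate * m_hat / (math.sqrt(v_hat) + epsilon)
    return param, m, v

# First step (t=1) for a steep parameter and a shallow one.
steep, _, _ = adam_step(param=0.0, grad=100.0, m=0.0, v=0.0, t=1)
shallow, _, _ = adam_step(param=0.0, grad=0.01, m=0.0, v=0.0, t=1)
print(steep, shallow)
```

Both parameters move by almost exactly the learning rate, 1e-4, even though their gradients differ by a factor of 10,000. Plain SGD at the same learning rate would have moved them by 1e-2 and 1e-6. That normalization is why Adam is so much less sensitive to the step size you pick.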

train_step = tf.train.AdamOptimizer(1e-4).minimize(cross_entropy)

One line changes. Re-run.

0.9288 # 100,000 steps

0.9281 # 200,000 steps

That’s it. Same ceiling. Adam gets there faster and more reliably, but the ceiling was never about the optimizer. The lesson generalizes: optimizer choice matters for how you reach a given quality level, not for where the quality level is.

So if the optimizer isn’t the bottleneck and more training isn’t the bottleneck, what is? The model itself. A single matrix multiply isn’t deep enough to represent the structure that distinguishes a sloppy 3 from a sloppy 8. We need a deeper network. More specifically, we need a different kind of network.

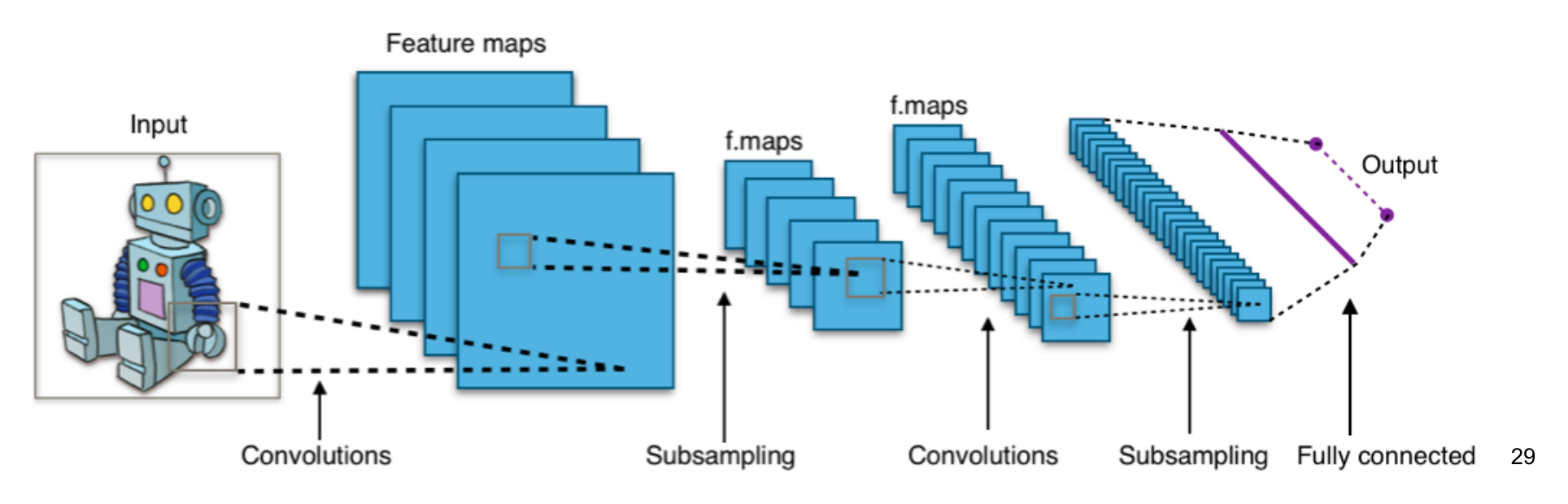

Knob three: convolutions

The model that broke through 99% accuracy on MNIST wasn’t deeper in the dumb sense of “more matrix multiplies.” It was structurally different. It used convolutional layers.

Here is the problem a convolutional layer is built to solve. A fully-connected layer treats every input pixel as independent of every other input pixel. It has no idea that pixel (4, 5) is next to pixel (4, 6). For a 28×28 MNIST image flattened to a 784-vector, the network has to discover from scratch that locality matters. For a full-color 224×224 photograph (the kind of input you would actually want for cat detection), flattening produces a 150,528-vector, and a single fully-connected layer with even a modest 1024 hidden units would have about 154 million weights. That is a parameter explosion before the network has done anything useful.
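The parameter counts in that paragraph are simple multiplication, sketched here so the scale is visible. The helper name is my own.

```python
def dense_weight_count(n_inputs, n_units):
    """Weights in a fully-connected layer (biases not counted)."""
    return n_inputs * n_units

mnist_linear = dense_weight_count(28 * 28, 10)          # the linear model above
photo_inputs = 224 * 224 * 3                            # flattened color photograph
photo_hidden = dense_weight_count(photo_inputs, 1024)   # one modest hidden layer

print(mnist_linear)   # 7840
print(photo_inputs)   # 150528
print(photo_hidden)   # 154140672
```

Going from MNIST to a photograph multiplies the first layer's weight count by nearly 20,000.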

A convolutional layer encodes the locality you want into the architecture itself. You define a small filter (say, 5×5 pixels with one learnable weight per pixel) and slide that filter across every position in the input image. At each position, the layer computes a dot product between the filter and the patch of image underneath it, producing a single number. The result is a new 2D image (an activation map or feature map) whose pixels light up where the original image contains whatever pattern the filter is tuned to detect. A vertical-edge filter produces a feature map that lights up along vertical edges. A diagonal-stroke filter produces one that lights up along diagonal strokes. The same 25 weights are reused at every spatial position, so the parameter count is comically small compared to the fully-connected equivalent, and translation equivariance is built in for free: a cat in the top-left activates the same filter the same way as a cat in the bottom-right, just at a different position in the feature map.
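The sliding dot product is easy to write by hand. This is a plain-Python sketch, not tf.nn.conv2d: it uses a 3×3 filter instead of 5×5, a single channel, and no padding, and the vertical-edge filter is hand-written where a real network would learn it. The two tiny test images are made up.

```python
def convolve2d(image, kernel):
    """Slide kernel over image with no padding; each output is one dot product."""
    k_rows, k_cols = len(kernel), len(kernel[0])
    out_rows = len(image) - k_rows + 1
    out_cols = len(image[0]) - k_cols + 1
    return [[sum(image[r + i][c + j] * kernel[i][j]
                 for i in range(k_rows) for j in range(k_cols))
             for c in range(out_cols)]
            for r in range(out_rows)]

# Hand-written vertical-edge detector: responds where left is dark and right is bright.
vertical_edge = [[-1, 0, 1],
                 [-1, 0, 1],
                 [-1, 0, 1]]

# Two 4x6 images, dark on the left and bright on the right, edges one column apart.
edge_at_3 = [[0, 0, 0, 1, 1, 1] for _ in range(4)]
edge_at_4 = [[0, 0, 0, 0, 1, 1] for _ in range(4)]

print(convolve2d(edge_at_3, vertical_edge))  # [[0, 3, 3, 0], [0, 3, 3, 0]]
print(convolve2d(edge_at_4, vertical_edge))  # [[0, 0, 3, 3], [0, 0, 3, 3]]
```

The feature map is zero over the flat regions and lights up around the edge. Move the edge one column to the right and the response moves one column to the right, using the same nine weights.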

The deep trick is that the filter weights are learned. You do not tell the network “look for edges, look for corners”; the network discovers, by gradient descent on the loss, the filters that happen to make its predictions less wrong. Stack several of these layers, and something remarkable falls out. Early layers learn to detect low-level features (edges, color blobs, gradients). Middle layers learn combinations of those (curves, corners, simple shapes). Late layers learn combinations of those (textures, parts, whole objects). Nobody designs the hierarchy. It emerges from the training objective. The first time you visualize what each layer is actually doing, it feels like watching a magic trick.

Convolution captures spatial pattern. Subsampling (also called pooling) is the second move: take each 2×2 neighborhood in a feature map and keep only the strongest activation, discarding the rest. This shrinks the tensor by 4× and makes the representation more tolerant to small shifts in the input. It also lets the next convolution see a wider effective receptive field: a 5×5 filter after one pooling step effectively covers a 10×10 region of the feature map that was pooled. After two or three rounds of convolve-then-pool, the spatial dimensions are small enough that you can flatten the result into a vector and feed it into a couple of plain fully-connected layers that do the final classification.
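Max pooling is even simpler than convolution. A plain-Python sketch with a made-up 4×4 feature map; the function name pool_2x2 is my own, and it assumes even dimensions rather than handling padding the way tf.nn.max_pool does.

```python
def pool_2x2(feature_map):
    """Keep the largest value in each non-overlapping 2x2 block (even dimensions assumed)."""
    return [[max(feature_map[r][c], feature_map[r][c + 1],
                 feature_map[r + 1][c], feature_map[r + 1][c + 1])
             for c in range(0, len(feature_map[0]), 2)]
            for r in range(0, len(feature_map), 2)]

feature_map = [[1, 3, 2, 0],
               [4, 2, 1, 1],
               [0, 0, 5, 6],
               [1, 2, 7, 8]]

pooled = pool_2x2(feature_map)
print(pooled)  # [[4, 2], [2, 8]]
```

Sixteen values become four. If the 4 in the top-left block had been one pixel over, the pooled output would be identical, which is the shift tolerance described above.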

The code is longer but no harder to read:

## mnist2.py (excerpt)

def conv2d(x, W):

    return tf.nn.conv2d(x, W, strides=[1, 1, 1, 1], padding='SAME')

def max_pool_2x2(x):

    return tf.nn.max_pool(x, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1],

                          padding='SAME')

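# weight_variable and bias_variable are small helpers defined elsewhere in mnist2.py (omitted from this excerpt)

# Convolutional layer #1: 32 filters, 5x5 each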

W_conv1 = weight_variable([5, 5, 1, 32])

b_conv1 = bias_variable([32])

x_image = tf.reshape(x, [-1, 28, 28, 1])

h_conv1 = tf.nn.relu(conv2d(x_image, W_conv1) + b_conv1)

h_pool1 = max_pool_2x2(h_conv1)

# Convolutional layer #2: 64 filters, 5x5 each

W_conv2 = weight_variable([5, 5, 32, 64])

b_conv2 = bias_variable([64])

h_conv2 = tf.nn.relu(conv2d(h_pool1, W_conv2) + b_conv2)

h_pool2 = max_pool_2x2(h_conv2)

# Fully connected layer: 1024 neurons

W_fc1 = weight_variable([7 * 7 * 64, 1024])

b_fc1 = bias_variable([1024])

h_pool2_flat = tf.reshape(h_pool2, [-1, 7 * 7 * 64])

h_fc1 = tf.nn.relu(tf.matmul(h_pool2_flat, W_fc1) + b_fc1)

# Readout layer (10 classes)

W_fc2 = weight_variable([1024, 10])

b_fc2 = bias_variable([10])

y_conv = tf.matmul(h_fc1, W_fc2) + b_fc2
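The 7 * 7 * 64 in the listing is not arbitrary. It falls out of tracing the tensor shape through the layers, and the same trace gives the parameter count. The sketch below is my own bookkeeping in plain Python, read off the shapes in the excerpt; it assumes 'SAME' padding keeps height and width unchanged at stride 1 and that 2×2 pooling at stride 2 halves each side, rounding up.

```python
import math

def same_conv_shape(height, width, n_filters):
    # 'SAME' padding with stride 1 keeps height and width unchanged.
    return height, width, n_filters

def pool_2x2_shape(height, width, channels):
    # 2x2 pooling with stride 2 and 'SAME' padding halves each side, rounding up.
    return math.ceil(height / 2), math.ceil(width / 2), channels

shape = (28, 28, 1)                                # x_image
shape = same_conv_shape(shape[0], shape[1], 32)    # h_conv1: (28, 28, 32)
shape = pool_2x2_shape(*shape)                     # h_pool1: (14, 14, 32)
shape = same_conv_shape(shape[0], shape[1], 64)    # h_conv2: (14, 14, 64)
shape = pool_2x2_shape(*shape)                     # h_pool2: (7, 7, 64)
flat = shape[0] * shape[1] * shape[2]              # 3136

# Weights plus biases per layer.
params = {
    "conv1": 5 * 5 * 1 * 32 + 32,
    "conv2": 5 * 5 * 32 * 64 + 64,
    "fc1": flat * 1024 + 1024,
    "fc2": 1024 * 10 + 10,
}
total = sum(params.values())
print(shape, flat)
print(params, total)
```

The whole network comes to about 3.27 million parameters, and roughly 98% of them sit in the first fully-connected layer. The two convolutional layers, which do the interesting work, account for about 52,000.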

The nonlinearity between layers is tf.nn.relu, the rectified linear unit, which is just max(0, x). ReLU is shockingly effective: it is cheap to compute, its gradient is either 0 or 1, and it largely avoids the vanishing-gradient problem that crippled deep sigmoid networks. When the community switched to ReLU around 2012, networks trained several times faster; the AlexNet paper reported roughly a sixfold speedup over tanh units on a small CIFAR-10 network.
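The vanishing-gradient point can be seen with a few multiplications. During backpropagation the gradient reaching an early layer is a product that includes one activation slope per layer. The sketch below is a deliberately simplified illustration of my own: it ignores the weight matrices, which also multiply into that product, and looks only at the activation slopes over a hypothetical 10-layer path.

```python
import math

def sigmoid_grad(z):
    """Slope of the sigmoid at z; it peaks at 0.25 when z = 0."""
    s = 1.0 / (1.0 + math.exp(-z))
    return s * (1.0 - s)

def relu_grad(z):
    """Slope of max(0, z): 1 for positive inputs, 0 otherwise."""
    return 1.0 if z > 0 else 0.0

depth = 10
# Best case for sigmoid: every unit sits exactly at z = 0.
sigmoid_chain = sigmoid_grad(0.0) ** depth
# An active ReLU path: every unit has a positive input.
relu_chain = relu_grad(1.0) ** depth

print(sigmoid_chain)  # about 9.5e-07
print(relu_chain)     # 1.0
```

Even in the sigmoid's best case the signal shrinks by a factor of a million over ten layers, while an active ReLU path passes it through untouched. The price is that a ReLU unit with a negative input passes nothing at all.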

Re-train, with the Adam optimizer and 1,000 steps:

test accuracy 0.9725

97% after 1,000 steps, one-tenth as many as the 10,000-step linear run that finished under 92%. With 10,000 steps (about 18 epochs), the stream looked like this:

step 0, training accuracy 0.15

step 1000, training accuracy 0.96

step 2000, training accuracy 1

step 3000, training accuracy 1

step 4000, training accuracy 1

step 5000, training accuracy 0.99

step 6000, training accuracy 1

step 7000, training accuracy 1

step 8000, training accuracy 1

step 9000, training accuracy 1

test accuracy 0.9898

Compare that to the bouncing 0.85-to-0.95 stream from the linear model and you can see, in the shape of the numbers alone, that something fundamentally different is happening. The model is not gradually scraping its way toward a ceiling. By step 2000 it has already pinned the training batches to 100% accuracy and is mostly refining its general grasp from there. This is the visual signature of a network that has the structural capacity to fit the task. It is also the visual warning sign of overfitting, which we will fix in a moment.

100,000 steps, about 180 epochs:

test accuracy 0.9922

By this point the training run was taking close to an hour on the K80. That felt like an eternity at the time, and it is comically short by modern standards. People train frontier LLMs for weeks or months, and production CV models for many days. The interval between “submit job” and “see result” shapes the entire rhythm of how you do ML work, and learning that rhythm (knowing when you can iterate freely versus when you have committed to a multi-day run) is most of what separates the experienced ML engineer from a beginner. The dial labeled “patience required” gets a workout that the textbooks rarely mention.

This is also what a working architecture feels like. The same training procedure that was stalled at 92% with a linear model walks past 99% with two convolutional layers and a fully-connected head. The architecture, not the optimizer or the training duration, is what moved the ceiling.

Knob four: dropout

99.22% is good. It is good enough that you start worrying about a different problem: overfitting. The training accuracy was 100% well before the test accuracy peaked. The network had begun to memorize the training set, not just the underlying pattern.

The 2014 trick for this was dropout: during training, randomly zero out half the activations in a given layer on every step. The network can no longer rely on any specific neuron being available, so it learns redundant representations that generalize better. At inference time, you turn dropout off; the redundancy stays, the network is now more robust.

keep_prob = tf.placeholder(tf.float32)

h_fc1_drop = tf.nn.dropout(h_fc1, keep_prob)

y_conv = tf.matmul(h_fc1_drop, W_fc2) + b_fc2
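What tf.nn.dropout does to a layer is small enough to sketch in plain Python. It keeps each activation with probability keep_prob and scales the survivors by 1 / keep_prob, so the expected value of every activation is unchanged. The sketch below assumes a keep_prob of 0.5 during training, matching “zero out half,” and 1.0 at evaluation time; the seeded generator and the all-ones input are just for illustration.

```python
import random

def dropout(activations, keep_prob, rng):
    """Zero each activation with probability 1 - keep_prob; scale survivors by 1 / keep_prob."""
    return [a / keep_prob if rng.random() < keep_prob else 0.0
            for a in activations]

rng = random.Random(0)           # seeded so the example is repeatable
activations = [1.0] * 10000

training_out = dropout(activations, keep_prob=0.5, rng=rng)    # training: about half dropped
inference_out = dropout(activations, keep_prob=1.0, rng=rng)   # evaluation: nothing dropped

print(sum(training_out) / len(training_out))   # close to 1.0
print(inference_out == activations)            # True
```

Each surviving activation is doubled, so the layer's average output stays near 1.0 even with half the units silenced. That is why the same weights work unchanged once dropout is switched off: the next layer sees inputs on the same scale in both modes.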

With dropout, MNIST gets to 99.35%. The improvement is small because we weren’t overfitting much. The dataset is too easy. Dropout’s effect grows with model size and dataset complexity. Today most modern architectures use it in some form, often alongside batch normalization and weight decay, all of which are different cuts at the same problem of “stop the network from memorizing.”

The cat wall

So I had a working digit classifier. I had passed every intermediate test the tutorial set for me. The state of the art on MNIST, in 2017, was less than half a percentage point ahead of what I had trained in a few hundred lines of code on a $0.90/hour GPU. Time, surely, to do cats.

I sat with this for a while and realized that I had no idea how to do cats.

The MNIST recipe doesn’t generalize, and the reasons why are the most useful thing I learned. Digits are 28×28 grayscale. Cats are full-resolution color photographs of arbitrary aspect ratio, with cats at arbitrary scale, pose, and position. Digits are a closed-world ten-class problem with a clean labeled training set already prepared and waiting. Cats live in a world where the closest dataset to what I wanted was the ImageNet “domestic cat” subset, and even that had been curated by someone with an opinion about what counts.

The architectural step from “two-layer CNN” to “production-quality image classifier” is also enormous. The networks that worked on real images had names: AlexNet, VGG, Inception, ResNet. ResNet introduced skip connections specifically to make very deep networks (50, 100, 150 layers) trainable at all. None of this was discoverable from MNIST. The MNIST CNN gave me the vocabulary but not the depth.

What the journey actually taught

I never trained the cat detector. I never even started. And in retrospect that is the most useful thing about the project, because it taught me, viscerally, what I now think of as the four real facts about training:

1. Training is not a recipe; it is a search. The model starts as random numbers. Every step, the gradient pushes the random numbers toward less-bad numbers. The “training run” is the path through parameter space that this push describes. When people talk about “training instability” or “loss spikes” or “the model didn’t converge,” they are talking about the geometry of that search. Knowing that the search exists, that it’s a real object with a shape, changes how you read every fine-tuning report.

2. The biggest knob is the architecture. Optimizers, learning rates, batch sizes, regularization. These are second-order. The thing that decides whether you can reach 99% on your task is whether the network has the structural capacity to represent the function you want. Most “we tried hyperparameter sweeps and didn’t move the metric” stories are stories about hitting the architectural ceiling and not realizing it.

3. Most of the work is data. I never got past the “stuff goes here” stage of the cat project because data is the part nobody warns you about. The actual ratio of effort, on a from-scratch ML project, is something like 70% data work, 20% training infrastructure, 10% model code. Foundation models have changed this somewhat by absorbing the data cost into pre-training, but for any task that requires fine-tuning, the data ratio is largely the same. The reason fine-tuning often “doesn’t work” is almost always a data problem dressed up as a training problem.

4. The optimizer’s job is to make a real model. Your job is to make sure the real model is the right one. The optimizer is genuinely good at minimizing whatever loss you wrote down. If the loss is wrong (wrong objective, wrong proxy for what you care about, wrong distribution of training examples), the optimizer will faithfully produce a model that minimizes the wrong thing. This is the verification problem at the bottom of the stack: a training loop is a faithful executor of whatever you point it at.

You probably don’t need to do this

It would be cruel to end without saying the obvious thing, which is that in 2026 you almost certainly should not train an image classifier from scratch. ResNet-50, EfficientNet, MobileNet, the various vision transformers, are sitting on Hugging Face with permissive licenses, pre-trained on ImageNet-scale data, ready to be fine-tuned on your specific task in an hour. The amount of compute that went into producing those weights is on the order of thousands of GPU-days. You are not going to outperform them with a from-scratch run on a single instance.

The same is true, more emphatically, for text. Nobody is training a frontier-grade LLM in a weekend project. The whole point of foundation models is that the heavy training has been amortized. Your job, downstream, is to specialize the existing weights to your domain, evaluate carefully, and deploy.

So why bother understanding what’s underneath?

Because the underneath does not go away. When your fine-tune doesn’t converge, the question is which knob you turn, and the answer depends on knowing whether you’re fighting an architectural ceiling, a data problem, an optimizer problem, or a loss-function problem. When your model gives confident wrong answers, the question is whether the training data taught it to be confident about exactly that wrong answer, and that requires being able to think about what the loss was actually rewarding. In 2026, you choose between adapters, full fine-tuning, instruction tuning, RLHF variants, or just better prompting. You are choosing between different ways of moving a known set of weights through parameter space. Knowing the shape of that space helps.

References:

- LeCun, Y., Bengio, Y., Hinton, G. (2015). Deep Learning. Nature. The canonical overview from three of the field’s founders.

- Krizhevsky, A., Sutskever, I., Hinton, G. (2012). ImageNet Classification with Deep Convolutional Neural Networks. The AlexNet paper. The point at which CNNs became the default for image classification.

- He, K., et al. (2015). Deep Residual Learning for Image Recognition. ResNet. The skip-connection trick that made very deep networks trainable.

- Srivastava, N., et al. (2014). Dropout: A Simple Way to Prevent Neural Networks from Overfitting. The original dropout paper.

- Kingma, D., Ba, J. (2014). Adam: A Method for Stochastic Optimization. The Adam optimizer paper.

- Nielsen, M. Neural Networks and Deep Learning. The clearest free book on what backpropagation is actually doing.

- 3Blue1Brown. Neural networks playlist. If you want the gradient-descent visualization burned into your retinas, watch these.